📌 TOPINDIATOURS Exclusive ai: SpaceX Hit With Back to Back Lawsuits From Workers W

SpaceX is facing a one-two punch of personal injury lawsuits after two workers sued the Elon Musk-owned company this month over on-the-job injuries, the San Antonio Express-News reports, adding to the poor safety track record at its Starbase facility in South Texas.

The latest suit, filed in Cameron County on Monday, was leveled against SpaceX and the steel firm W&W Erectors LLC by a subcontractor named Julian Escalante, who was working on one of the launchpads used by Starship — the largest rocket in the world, whose development is under massive pressure for being behind schedule.

According to the suit, Escalante’s right arm got “entangled and pinched” by a metal bucket holding “approximately 200 pounds” of industrial-sized bolts after it tumbled from a pallet.

“As the bucket fell, (Escalante’s) right arm was dragged downward with (the bucket),” the suit said, per the Express-News. “The downward force pulled (his) right shoulder, and (he) fell with the bucket as it hit the ground.”

Just as concerning as the accident, which took place in November, was his higher-ups’ alleged response to it. When Escalante reported it to his supervisor, the supervisor told him “not to report the injury and instructed him to return to work,” the suit claims, and his foreman, Joe Pedroza, had basically the same advice: “Just don’t tell anyone.”

But Escalante wanted medical care for his injury. His inquiries into where management stood on his request allegedly did not sit well with the General Foreman, identified only as “Wero,” who told Escalante to “be a man” and “stop crying,” according to the suit. His lawyers say that SpaceX was negligent in maintaining a safe job site.

A similar lawsuit was filed earlier this month by a worker named Sergio Ortiz, who says that in 2024 he was struck by falling debris while working in an elevator shaft at Starbase, the Express-News reported. According to the suit, welding leads, the heavy cables used by welding machines, which can weigh up to 80 pounds, fell from above and struck his head.

The suits are the latest to put the safety track record of SpaceX under the microscope. For years, it’s faced suits for accidents and even deaths, including a botched rocket test that left one employee in a permanent coma when a piece of one of Starship’s engines flew off and fractured his skull. A Reuters investigation in 2023 found at least 600 cases of workplace injuries at SpaceX that went unreported.

The company is under intense pressure to perfect Starship, which NASA has selected to perform a lunar landing in its upcoming Artemis III mission — a role that’s now under some doubt.

The company also allegedly has a track record of retaliating against employees. It has faced several lawsuits over sexual harassment, including some that implicated CEO Musk. In a 2024 lawsuit, eight former employees accused the company of illegally firing them after raising concerns over sexual harassment.

It’s not the only Musk-owned venture facing similar legal allegations, either. His automaker Tesla has also been hit with numerous lawsuits over brutal working conditions and workplace accidents, joined by widespread accounts of Musk impulsively firing employees in fits of rage, and allegedly threatening to deport an engineer for raising a critical safety issue.


The post SpaceX Hit With Back to Back Lawsuits From Workers Who Say They Were Brutally Injured on the Job appeared first on Futurism.

🔗 Source: futurism.com

📌 TOPINDIATOURS Update ai: Adobe Research Unlocking Long-Term Memory in Video Worl

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
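To make the scaling concrete, here is a rough back-of-the-envelope sketch. The tokens-per-frame value and both cost formulas are illustrative assumptions rather than figures from the paper: full attention is modeled as one score per token pair, and a linear-time scan as one state update per token.

```python
def attention_pairs(num_frames: int, tokens_per_frame: int) -> int:
    """Query-key pairs scored by full self-attention over every token."""
    n = num_frames * tokens_per_frame
    return n * n


def scan_steps(num_frames: int, tokens_per_frame: int) -> int:
    """State updates performed by a linear-time recurrent scan."""
    return num_frames * tokens_per_frame


if __name__ == "__main__":
    tokens_per_frame = 256  # hypothetical value, chosen only for illustration
    for frames in (16, 64, 256):
        pairs = attention_pairs(frames, tokens_per_frame)
        steps = scan_steps(frames, tokens_per_frame)
        print(f"{frames:4d} frames: attention pairs = {pairs:,}, scan steps = {steps:,}")
```

Under these assumptions, doubling the number of context frames quadruples the attention work but only doubles the scan work, which is the gap the article describes.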

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

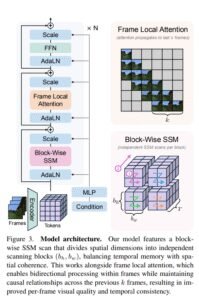

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
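The idea of a compressed state carried across blocks can be illustrated with a toy example. The sketch below is not the paper's layer: it assumes a scalar linear recurrence with fixed, hypothetical `decay` and `gain` values, and the function names are made up for illustration. It only shows that scanning block by block while handing the final state to the next block reproduces a single scan over the whole sequence.

```python
from typing import List, Tuple


def scan(xs: List[float], decay: float, gain: float,
         state: float = 0.0) -> Tuple[List[float], float]:
    """Run h_t = decay * h_{t-1} + gain * x_t; return every h_t and the final state."""
    outputs = []
    for x in xs:
        state = decay * state + gain * x
        outputs.append(state)
    return outputs, state


def blockwise_scan(xs: List[float], block_size: int,
                   decay: float, gain: float) -> List[float]:
    """Scan one block at a time, carrying the compressed state between blocks."""
    outputs: List[float] = []
    state = 0.0  # the only information passed from one block to the next
    for start in range(0, len(xs), block_size):
        block = xs[start:start + block_size]
        block_out, state = scan(block, decay, gain, state)
        outputs.extend(block_out)
    return outputs


if __name__ == "__main__":
    signal = [float(i % 7) for i in range(20)]
    full, _ = scan(signal, decay=0.9, gain=0.1)
    blocked = blockwise_scan(signal, block_size=6, decay=0.9, gain=0.1)
    print(max(abs(a - b) for a, b in zip(full, blocked)))
```

In this toy setting the carried state is a single number, so memory between blocks stays constant regardless of how long the sequence grows.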

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During long-context training, the model generates frames conditioned on a prefix of the input, which forces it to maintain consistency over longer durations. When no prefix is sampled and all tokens are kept noised, training becomes equivalent to diffusion forcing, which the authors highlight as the special case of long-context training with a prefix length of zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
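The chunked attention pattern described above can be sketched as a frame-level boolean mask. This is one reading of the description, not the authors' code: it assumes a window of 10 frames means the current chunk of 5 plus the previous chunk of 5, and it builds the mask in plain Python instead of using FlexAttention. The function name `frame_local_mask` is hypothetical.

```python
from typing import List


def frame_local_mask(num_frames: int, chunk_size: int = 5) -> List[List[bool]]:
    """mask[q][k] is True when query frame q may attend to key frame k."""
    mask = []
    for q in range(num_frames):
        q_chunk = q // chunk_size
        row = []
        for k in range(num_frames):
            k_chunk = k // chunk_size
            same_chunk = k_chunk == q_chunk          # bidirectional within a chunk
            previous_chunk = k_chunk == q_chunk - 1  # look back exactly one chunk
            row.append(same_chunk or previous_chunk)
        mask.append(row)
    return mask


if __name__ == "__main__":
    for row in frame_local_mask(15):
        print("".join("x" if allowed else "." for allowed in row))
```

Under this reading, every frame sees at most 10 frames no matter how long the video is, so the attention cost per frame stays bounded while the SSM state handles longer-range memory.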

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

🤖 TOPINDIATOURS Note

This article is an automated summary compiled from several trusted sources. We pick trending topics so you can stay up to date.
