📌 TOPINDIATOURS Breaking ai: Video: New control system makes bipedal robots 81% mo

Humanoid robots are getting better at catching themselves before they fall.

Researchers at Georgia Tech have developed a real-time planning and control framework that significantly improves how two-legged robots recover from sudden disturbances while walking on uneven or moving terrain.

The system allows a bipedal robot to detect instability early and adjust its next steps before a fall happens.

Instead of relying on fixed movement patterns, the robot continuously evaluates whether its current motion plan will keep it stable. If not, it updates its next moves in real time.

The research addresses a major gap in robotics: recovery after unexpected directional shifts.

For example, if a robot is standing on a moving vehicle that suddenly turns, or if it encounters an obstacle mid-stride, it must quickly replan its motion to avoid collapse. Until now, recovery in such dynamic scenarios has been underexplored.

The team, led by Ye Zhao, director of the Georgia Tech Laboratory for Intelligent Decision and Autonomous Robots, and Ph.D. student Zhaoyuan Gu, designed a framework that combines formal logic rules with model predictive control.

The result is a structured decision-making system that helps robots react faster and more reliably under stress.

Smarter steps under stress

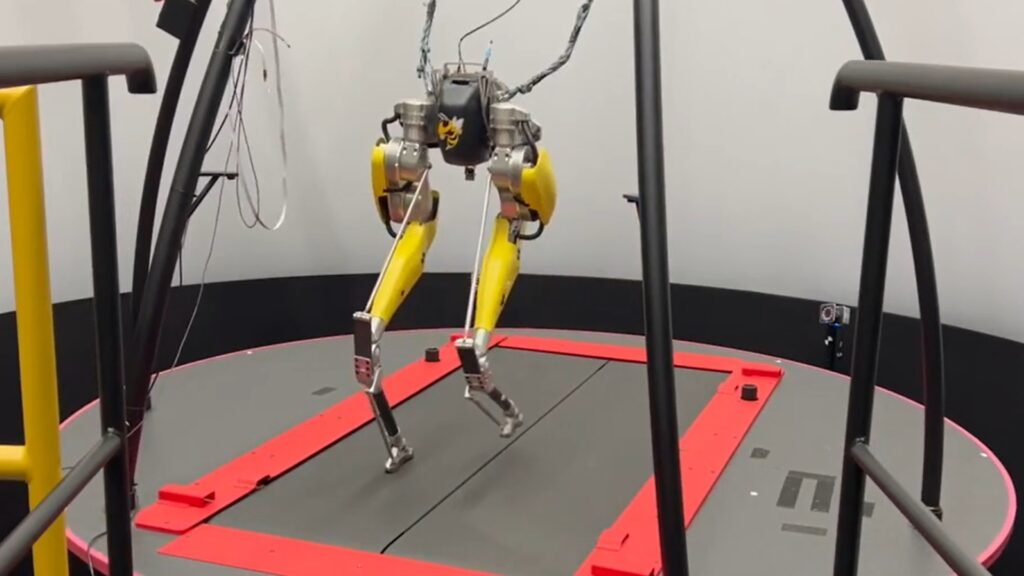

The framework was implemented on Cassie, a widely used two-legged research robot.

Testing took place inside Georgia Tech’s Human Augmentation Core Facility using a programmable treadmill system called CAREN, which can shift direction and speed unpredictably.

To further stress-test the robot, researchers added a BumpEm device that delivers stronger physical jolts.

Earlier experiments without the framework showed that bipedal robots struggled to identify stable recovery strategies and frequently fell.

With the new system in place, Cassie demonstrated faster decision-making, improved collision avoidance, and more confident stepping on moving platforms and varied terrain.

Overall, the researchers report an 81 percent increase in the robot’s ability to recover from instability.

Zhao said, “The results we got through this project are very impressive. They’re the most comprehensive and extensive hardware results we’ve published so far.”

The robot was not flawless. It performed less effectively when walking downhill, where maintaining balance requires riskier foot placement.

In one extreme test involving a wide step and cross-legged maneuver, Cassie failed to recover. Researchers noted that the narrow treadmill limited feasible recovery space.

Toward reliable humanoids

The team sees the work as foundational for deploying humanoid robots in real-world environments where terrain is unpredictable.

Marine settings are a particular focus, where maintenance tasks on ships can be hazardous for human workers. The system will eventually be tested at sea through the Office of Naval Research.

Gu emphasized the broader vision for bipedal machines.

“Humanoid robots are coming to your homes, coming to the factories, coming to logistics. They’re going to show up on the street. It’s exciting,” he said.

The researchers argue that advancing humanoid deployment requires more than mechanical design.

Stability algorithms and real-time intelligence are equally critical for safe human-robot interaction.

By formalizing how robots respond to disturbances, the framework offers a structured path toward safer autonomous locomotion.

The study was published in IEEE Transactions on Robotics.

🔗 Sumber: interestingengineering.com

📌 TOPINDIATOURS Breaking ai: MIT Researchers Unveil “SEAL”: A New Step Towards Sel

The concept of AI self-improvement has been a hot topic in recent research circles, with a flurry of papers emerging and prominent figures like OpenAI CEO Sam Altman weighing in on the future of self-evolving intelligent systems. Now, a new paper from MIT, titled “Self-Adapting Language Models,” introduces SEAL (Self-Adapting LLMs), a novel framework that allows large language models (LLMs) to update their own weights. This development is seen as another significant step towards the realization of truly self-evolving AI.

The research paper, published yesterday, has already ignited considerable discussion, including on Hacker News. SEAL proposes a method where an LLM can generate its own training data through “self-editing” and subsequently update its weights based on new inputs. Crucially, this self-editing process is learned via reinforcement learning, with the reward mechanism tied to the updated model’s downstream performance.

The timing of this paper is particularly notable given the recent surge in interest surrounding AI self-evolution. Earlier this month, several other research efforts garnered attention, including Sakana AI and the University of British Columbia’s “Darwin-Gödel Machine (DGM),” CMU’s “Self-Rewarding Training (SRT),” Shanghai Jiao Tong University’s “MM-UPT” framework for continuous self-improvement in multimodal large models, and the “UI-Genie” self-improvement framework from The Chinese University of Hong Kong in collaboration with vivo.

Adding to the buzz, OpenAI CEO Sam Altman recently shared his vision of a future with self-improving AI and robots in his blog post, “The Gentle Singularity.” He posited that while the initial millions of humanoid robots would need traditional manufacturing, they would then be able to “operate the entire supply chain to build more robots, which can in turn build more chip fabrication facilities, data centers, and so on.” This was quickly followed by a tweet from @VraserX, claiming an OpenAI insider revealed the company was already running recursively self-improving AI internally, a claim that sparked widespread debate about its veracity.

Regardless of the specifics of internal OpenAI developments, the MIT paper on SEAL provides concrete evidence of AI’s progression towards self-evolution.

Understanding SEAL: Self-Adapting Language Models

The core idea behind SEAL is to enable language models to improve themselves when encountering new data by generating their own synthetic data and optimizing their parameters through self-editing. The model’s training objective is to directly generate these self-edits (SEs) using data provided within the model’s context.

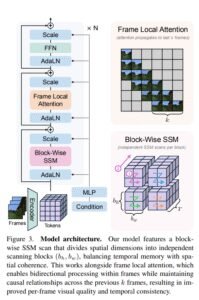

The generation of these self-edits is learned through reinforcement learning. The model is rewarded when the generated self-edits, once applied, lead to improved performance on the target task. Therefore, SEAL can be conceptualized as an algorithm with two nested loops: an outer reinforcement learning (RL) loop that optimizes the generation of self-edits, and an inner update loop that uses the generated self-edits to update the model via gradient descent.

This method can be viewed as an instance of meta-learning, where the focus is on how to generate effective self-edits in a meta-learning fashion.

A General Framework

SEAL operates on a single task instance (C,τ), where C is context information relevant to the task, and τ defines the downstream evaluation for assessing the model’s adaptation. For example, in a knowledge integration task, C might be a passage to be integrated into the model’s internal knowledge, and τ a set of questions about that passage.

Given C, the model generates a self-edit SE, which then updates its parameters through supervised fine-tuning: θ′←SFT(θ,SE). Reinforcement learning is used to optimize this self-edit generation: the model performs an action (generates SE), receives a reward r based on LMθ′’s performance on τ, and updates its policy to maximize the expected reward.

The researchers found that traditional online policy methods like GRPO and PPO led to unstable training. They ultimately opted for ReST^EM, a simpler, filtering-based behavioral cloning approach from a DeepMind paper. This method can be viewed as an Expectation-Maximization (EM) process, where the E-step samples candidate outputs from the current model policy, and the M-step reinforces only those samples that yield a positive reward through supervised fine-tuning.

The paper also notes that while the current implementation uses a single model to generate and learn from self-edits, these roles could be separated in a “teacher-student” setup.

Instantiating SEAL in Specific Domains

The MIT team instantiated SEAL in two specific domains: knowledge integration and few-shot learning.

- Knowledge Integration: The goal here is to effectively integrate information from articles into the model’s weights.

- Few-Shot Learning: This involves the model adapting to new tasks with very few examples.

Experimental Results

The experimental results for both few-shot learning and knowledge integration demonstrate the effectiveness of the SEAL framework.

In few-shot learning, using a Llama-3.2-1B-Instruct model, SEAL significantly improved adaptation success rates, achieving 72.5% compared to 20% for models using basic self-edits without RL training, and 0% without adaptation. While still below “Oracle TTT” (an idealized baseline), this indicates substantial progress.

For knowledge integration, using a larger Qwen2.5-7B model to integrate new facts from SQuAD articles, SEAL consistently outperformed baseline methods. Training with synthetically generated data from the base Qwen-2.5-7B model already showed notable improvements, and subsequent reinforcement learning further boosted performance. The accuracy also showed rapid improvement over external RL iterations, often surpassing setups using GPT-4.1 generated data within just two iterations.

Qualitative examples from the paper illustrate how reinforcement learning leads to the generation of more detailed self-edits, resulting in improved performance.

While promising, the researchers also acknowledge some limitations of the SEAL framework, including aspects related to catastrophic forgetting, computational overhead, and context-dependent evaluation. These are discussed in detail in the original paper.

Original Paper: https://arxiv.org/pdf/2506.10943

Project Site: https://jyopari.github.io/posts/seal

Github Repo: https://github.com/Continual-Intelligence/SEAL

The post MIT Researchers Unveil “SEAL”: A New Step Towards Self-Improving AI first appeared on Synced.

🔗 Sumber: syncedreview.com

🤖 Catatan TOPINDIATOURS

Artikel ini adalah rangkuman otomatis dari beberapa sumber terpercaya. Kami pilih topik yang sedang tren agar kamu selalu update tanpa ketinggalan.

✅ Update berikutnya dalam 30 menit — tema random menanti!