📌 TOPINDIATOURS Exclusive AI: Listen Labs raises $69M after viral billboard hiring

Alfred Wahlforss was running out of options. His startup, Listen Labs, needed to hire over 100 engineers, but competing against Mark Zuckerberg's $100 million offers seemed impossible. So he spent $5,000 — a fifth of his marketing budget — on a billboard in San Francisco displaying what looked like gibberish: five strings of random numbers.

The numbers were actually AI tokens. Decoded, they led to a coding challenge: build an algorithm to act as a digital bouncer at Berghain, the Berlin nightclub famous for rejecting nearly everyone at the door. Within days, thousands attempted the puzzle. 430 cracked it. Some got hired. The winner flew to Berlin, all expenses paid.

That unconventional approach has now attracted $69 million in Series B funding, led by Ribbit Capital with participation from Evantic and existing investors Sequoia Capital, Conviction, and Pear VC. The round values Listen Labs at $500 million and brings its total capital raised to $100 million. In the nine months since launch, the company has grown annualized revenue 15x to eight figures and conducted over one million AI-powered interviews.

"When you obsess over customers, everything else follows," Wahlforss said in an interview with VentureBeat. "Teams that use Listen bring the customer into every decision, from marketing to product, and when the customer is delighted, everyone is."

Why traditional market research is broken, and what Listen Labs is building to fix it

Listen's AI researcher finds participants, conducts in-depth interviews, and delivers actionable insights in hours, not weeks. The platform replaces the traditional choice between quantitative surveys, which provide statistical precision but miss nuance, and qualitative interviews, which deliver depth but cannot scale.

Wahlforss explained the limitation of existing approaches: "Essentially surveys give you false precision because people end up answering the same question… You can't get the outliers. People are actually not honest on surveys." The alternative, one-on-one human interviews, "gives you a lot of depth. You can ask follow up questions. You can kind of double check if they actually know what they're talking about. And the problem is you can't scale that."

The platform works in four steps: users create a study with AI assistance, Listen recruits participants from its global network of 30 million people, an AI moderator conducts in-depth interviews with follow-up questions, and results are packaged into executive-ready reports including key themes, highlight reels, and slide decks.

What distinguishes Listen's approach is its use of open-ended video conversations rather than multiple-choice forms. "In a survey, you can kind of guess what you should answer, and you have four options," Wahlforss said. "Oh, they probably want me to buy high income. Let me click on that button versus an open ended response. It just generates much more honesty."

The dirty secret of the $140 billion market research industry: rampant fraud

Listen finds and qualifies the right participants in its global network of 30 million people. But building that panel required confronting what Wahlforss called "one of the most shocking things that we've learned when we entered this industry"—rampant fraud.

"Essentially, there's a financial transaction involved, which means there will be bad players," he explained. "We actually had some of the largest companies, some of them have billions in revenue, send us people who claim to be kind of enterprise buyers to our platform and our system immediately detected, like, fraud, fraud, fraud, fraud, fraud."

The company built what it calls a "quality guard" that cross-references LinkedIn profiles with video responses to verify identity, checks consistency across how participants answer questions, and flags suspicious patterns. The result, according to Wahlforss: "People talk three times more. They're much more honest when they talk about sensitive topics like politics and mental health."

Emeritus, an online education company that uses Listen, reported that approximately 20% of survey responses previously fell into the fraudulent or low-quality category. With Listen, they reduced this to almost zero. "We did not have to replace any responses because of fraud or gibberish information," said Gabrielli Tiburi, Assistant Manager of Customer Insights at Emeritus.

How Microsoft, Sweetgreen, and Chubbies are using AI interviews to build better products

The speed advantage has proven central to Listen's pitch. Traditional customer research at Microsoft could take four to six weeks to generate insights. "By the time we get to them, either the decision has been made or we lose out on the opportunity to actually influence it," said Romani Patel, Senior Research Manager at Microsoft.

With Listen, Microsoft can now get insights in days, and in many cases, within hours.

The platform has already powered several high-profile initiatives. Microsoft used Listen Labs to collect global customer stories for its 50th anniversary celebration. "We wanted users to share how Copilot is empowering them to bring their best self forward," Patel said, "and we were able to collect those user video stories within a day." Traditionally, that kind of work would have taken six to eight weeks.

Simple Modern, an Oklahoma-based drinkware company, used Listen to test a new product concept. The process took about an hour to write questions, an hour to launch the study, and 2.5 hours to receive feedback from 120 people across the country. "We went from 'Should we even have this product?' to 'How should we launch it?'" said Chris Hoyle, the company's Chief Marketing Officer.

Chubbies, the shorts brand, achieved a 24x increase in youth research participation (growing from 5 to 120 participants) by using Listen to overcome the scheduling challenges of traditional focus groups with children. "There's school, sports, dinner, and homework," explained Lauren Neville, Director of Insights and Innovation. "I had to find a way to hear from them that fit into their schedules."

The company also discovered product issues through AI interviews that might have gone undetected otherwise. Wahlforss described how the AI "through conversations, realized there were like issues with the kids short line, and decided to, like, interview hundreds of kids. And I understand that there were issues in the liner of the shorts and that they were, like, scratchy, quote, unquote, according to the people interviewed." The redesigned product became "a blockbuster hit."

The Jevons paradox explains why cheaper research creates more demand, not less

Listen Labs is entering a massive but fragmented market. Wahlforss cited research from Andreessen Horowitz estimating the market research ind…

Content automatically shortened.

🔗 Source: venturebeat.com

📌 TOPINDIATOURS Update AI: ByteDance Introduces Astra: A Dual-Model Architecture f

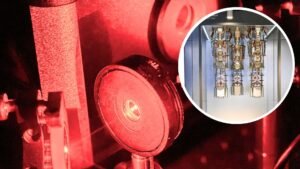

The increasing integration of robots across various sectors, from industrial manufacturing to daily life, highlights a growing need for advanced navigation systems. However, contemporary robot navigation systems face significant challenges in diverse and complex indoor environments, exposing the limitations of traditional approaches. Addressing the fundamental questions of “Where am I?”, “Where am I going?”, and “How do I get there?”, ByteDance has developed Astra, an innovative dual-model architecture designed to overcome these traditional navigation bottlenecks and enable general-purpose mobile robots.

Traditional navigation systems typically consist of multiple, smaller, and often rule-based modules to handle the core challenges of target localization, self-localization, and path planning. Target localization involves understanding natural language or image cues to pinpoint a destination on a map. Self-localization requires a robot to determine its precise position within a map, especially challenging in repetitive environments like warehouses where traditional methods often rely on artificial landmarks (e.g., QR codes). Path planning further divides into global planning for rough route generation and local planning for real-time obstacle avoidance and reaching intermediate waypoints.

While foundation models have shown promise in integrating smaller models to tackle broader tasks, the optimal number of models and their effective integration for comprehensive navigation remained an open question.

ByteDance’s Astra, detailed in their paper “Astra: Toward General-Purpose Mobile Robots via Hierarchical Multimodal Learning” (website: https://astra-mobility.github.io/), addresses these limitations. Following the System 1/System 2 paradigm, Astra features two primary sub-models: Astra-Global and Astra-Local. Astra-Global handles low-frequency tasks like target and self-localization, while Astra-Local manages high-frequency tasks such as local path planning and odometry estimation. This architecture promises to revolutionize how robots navigate complex indoor spaces.

Astra-Global: The Intelligent Brain for Global Localization

Astra-Global serves as the intelligent core of the Astra architecture, responsible for critical low-frequency tasks: self-localization and target localization. It functions as a Multimodal Large Language Model (MLLM), adept at processing both visual and linguistic inputs to achieve precise global positioning within a map. Its strength lies in utilizing a hybrid topological-semantic graph as contextual input, allowing the model to accurately locate positions based on query images or text prompts.

The construction of this robust localization system begins with offline mapping. The research team developed an offline method to build a hybrid topological-semantic graph G=(V,E,L):

- V (Nodes): Keyframes obtained by temporally downsampling the input video, together with their SfM-estimated 6-Degrees-of-Freedom (6-DoF) camera poses, serve as nodes that encode camera poses and landmark references.

- E (Edges): Undirected edges establish connectivity based on relative node poses, crucial for global path planning.

- L (Landmarks): Semantic landmark information is extracted by Astra-Global from visual data at each node, enriching the map’s semantic understanding. These landmarks store semantic attributes and are connected to multiple nodes via co-visibility relationships.
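The graph G=(V,E,L) described above can be pictured as a small data structure. The following is a minimal sketch, not the paper's implementation: the class names, the pose layout, and the use of breadth-first search for routing over E are all assumptions made for illustration.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Node:
    """A keyframe node: a 6-DoF pose plus references to visible landmarks."""
    node_id: int
    pose: tuple  # (x, y, z, roll, pitch, yaw); this layout is an assumption
    landmark_ids: set = field(default_factory=set)


@dataclass
class Landmark:
    """A semantic landmark linked to every node it is co-visible from."""
    landmark_id: int
    description: str
    node_ids: set = field(default_factory=set)


class HybridGraph:
    """Hypothetical container for G = (V, E, L)."""

    def __init__(self):
        self.nodes = {}      # V
        self.adjacency = {}  # E, stored as undirected adjacency sets
        self.landmarks = {}  # L

    def add_node(self, node_id, pose):
        self.nodes[node_id] = Node(node_id, pose)
        self.adjacency.setdefault(node_id, set())

    def add_edge(self, a, b):
        # Undirected: record the connection in both directions.
        self.adjacency[a].add(b)
        self.adjacency[b].add(a)

    def add_landmark(self, landmark_id, description, visible_from):
        self.landmarks[landmark_id] = Landmark(
            landmark_id, description, set(visible_from)
        )
        for node_id in visible_from:
            self.nodes[node_id].landmark_ids.add(landmark_id)

    def shortest_path(self, start, goal):
        """Breadth-first search over E; returns node ids or None."""
        parents = {start: None}
        queue = deque([start])
        while queue:
            current = queue.popleft()
            if current == goal:
                path = []
                while current is not None:
                    path.append(current)
                    current = parents[current]
                return path[::-1]
            for neighbor in sorted(self.adjacency[current]):
                if neighbor not in parents:
                    parents[neighbor] = current
                    queue.append(neighbor)
        return None


graph = HybridGraph()
for i in range(4):
    graph.add_node(i, (float(i), 0.0, 0.0, 0.0, 0.0, 0.0))
graph.add_edge(0, 1)
graph.add_edge(1, 2)
graph.add_edge(2, 3)
graph.add_landmark(0, "red sofa", visible_from=[1, 2])
route = graph.shortest_path(0, 3)
```

Storing the landmark-to-node links in both directions keeps lookups cheap whether the query starts from a node (which landmarks are visible here?) or from a landmark (which keyframes see it?).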

In practical localization, Astra-Global’s self-localization and target localization capabilities leverage a coarse-to-fine two-stage process for visual-language localization. The coarse stage analyzes input images and localization prompts, detects landmarks, establishes correspondence with a pre-built landmark map, and filters candidates based on visual consistency. The fine stage then uses the query image and coarse output to sample reference map nodes from the offline map, comparing their visual and positional information to directly output the predicted pose.

For language-based target localization, the model interprets natural language instructions, identifies relevant landmarks using their functional descriptions within the map, and then leverages landmark-to-node association mechanisms to locate relevant nodes, retrieving target images and 6-DoF poses.
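As a rough illustration of the landmark-to-node association step, the sketch below matches an instruction against landmark descriptions and returns the associated nodes with their poses. It is a toy under stated assumptions: in Astra the matching is done by the multimodal model, whereas here a simple word-overlap score stands in for it, and `LANDMARKS`, `NODE_POSES`, and `locate_target` are hypothetical names.

```python
def tokenize(text):
    """Lowercase whitespace tokenization; a stand-in for real language understanding."""
    return set(text.lower().split())


# Hypothetical landmark map: functional description plus co-visible node ids.
LANDMARKS = {
    "sofa": {"description": "a place to sit and rest", "node_ids": [2, 3]},
    "fridge": {"description": "keeps food and drinks cold", "node_ids": [5]},
}

# Hypothetical 6-DoF poses per node: (x, y, z, roll, pitch, yaw).
NODE_POSES = {
    2: (1.0, 0.0, 0.0, 0.0, 0.0, 0.0),
    3: (1.5, 0.5, 0.0, 0.0, 0.0, 1.57),
    5: (4.0, 2.0, 0.0, 0.0, 0.0, 3.14),
}


def locate_target(instruction, landmarks, node_poses):
    """Pick the landmark whose description best overlaps the instruction,
    then follow its node associations to retrieve candidate poses."""
    query = tokenize(instruction)
    best_name, best_score = None, 0
    for name, landmark in landmarks.items():
        score = len(query & tokenize(landmark["description"]))
        if score > best_score:
            best_name, best_score = name, score
    if best_name is None:
        return None, []
    node_ids = landmarks[best_name]["node_ids"]
    return best_name, [(node_id, node_poses[node_id]) for node_id in node_ids]


name, targets = locate_target(
    "find somewhere to keep my drinks cold", LANDMARKS, NODE_POSES
)
```

The point of the sketch is the two-hop lookup (instruction to landmark, landmark to nodes), not the scoring function, which a real system would replace with model inference.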

To empower Astra-Global with robust localization abilities, the team employed a meticulous training methodology. Using Qwen2.5-VL as the backbone, they combined Supervised Fine-Tuning (SFT) with Group Relative Policy Optimization (GRPO). SFT involved diverse datasets for various tasks, including coarse and fine localization, co-visibility detection, and motion trend estimation. In the GRPO phase, a rule-based reward function (including format, landmark extraction, map matching, and extra landmark rewards) was used to train for visual-language localization. Experiments showed GRPO significantly improved Astra-Global’s zero-shot generalization, achieving 99.9% localization accuracy in unseen home environments, surpassing SFT-only methods.
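The core idea of GRPO, scoring each sampled response relative to the other responses in its group, can be sketched in a few lines. The four reward components are named in the text above, but the weights, the component scores, and the function names below are assumptions for illustration, not values from the paper.

```python
import statistics

# Hypothetical weights for the four rule-based reward components.
WEIGHTS = {
    "format": 0.5,
    "landmark_extraction": 1.0,
    "map_matching": 1.0,
    "extra_landmark": 0.5,
}


def total_reward(components):
    """Weighted sum of the rule-based component scores for one response."""
    return sum(WEIGHTS[name] * components[name] for name in WEIGHTS)


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward by the mean and standard deviation of its group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(reward - mean) / (std + eps) for reward in rewards]


# Three responses sampled for the same localization prompt (made-up scores).
group = [
    {"format": 1.0, "landmark_extraction": 1.0, "map_matching": 1.0, "extra_landmark": 0.0},
    {"format": 1.0, "landmark_extraction": 0.5, "map_matching": 0.0, "extra_landmark": 0.0},
    {"format": 0.0, "landmark_extraction": 0.0, "map_matching": 0.0, "extra_landmark": 0.0},
]
rewards = [total_reward(components) for components in group]
advantages = group_relative_advantages(rewards)
```

Because advantages are computed within the group, no separate value network is needed: a response is reinforced only to the extent that it beats its siblings under the rule-based reward.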

Astra-Local: The Intelligent Assistant for Local Planning

Astra-Local handles Astra’s high-frequency tasks. It is a multi-task network that efficiently generates local paths and accurately estimates odometry from sensor data. Its architecture comprises three core components: a 4D spatio-temporal encoder, a planning head, and an odometry head.

The 4D spatio-temporal encoder replaces traditional mobile stack perception and prediction modules. It begins with a 3D spatial encoder that processes N omnidirectional images through a Vision Transformer (ViT) and Lift-Splat-Shoot to convert 2D image features into 3D voxel features. This 3D encoder is trained using self-supervised learning via 3D volumetric differentiable neural rendering. The 4D spatio-temporal encoder then builds upon the 3D encoder, taking past voxel features and future timestamps as input to predict future voxel features through ResNet and DiT modules, providing current and future environmental representations for planning and odometry.

The planning head generates executable trajectories using Transformer-based flow matching, based on pre-trained 4D features, robot speed, and task information. To prevent collisions, it incorporates a masked Euclidean Signed Distance Field (ESDF) loss, which calculates the ESDF of a 3D occupancy map and applies a 2D ground-truth trajectory mask, significantly reducing collision rates. Experiments demonstrate a better collision rate and overall score than other methods on out-of-distribution (OOD) datasets.
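To make the idea of an ESDF-based collision penalty concrete, here is a simplified sketch. It is not the paper's loss: it works on a 2D grid rather than a 3D occupancy map, computes distances by brute force, uses a hinge penalty with a hypothetical `margin`, and interprets the mask as selecting which cells contribute to the loss. All of these are assumptions.

```python
import math


def esdf_2d(occupancy):
    """Brute-force signed distance in cells: positive in free space
    (distance to nearest obstacle), negative inside obstacles."""
    rows, cols = len(occupancy), len(occupancy[0])
    cells = [(r, c) for r in range(rows) for c in range(cols)]
    occupied = [(r, c) for r, c in cells if occupancy[r][c]]
    free = [(r, c) for r, c in cells if not occupancy[r][c]]
    field = [[0.0] * cols for _ in range(rows)]
    for r, c in cells:
        inside = bool(occupancy[r][c])
        targets = free if inside else occupied
        if not targets:
            # No opposite-class cell exists; treat the distance as unbounded.
            field[r][c] = -math.inf if inside else math.inf
            continue
        distance = min(math.hypot(r - tr, c - tc) for tr, tc in targets)
        field[r][c] = -distance if inside else distance
    return field


def masked_esdf_loss(waypoints, field, mask, margin=1.5):
    """Mean hinge penalty for waypoints closer than `margin` to an obstacle,
    counting only waypoints whose cell is enabled in `mask`."""
    penalties = [
        max(0.0, margin - field[r][c]) for r, c in waypoints if mask[r][c]
    ]
    return sum(penalties) / len(penalties) if penalties else 0.0


# 5x5 grid with a single obstacle in the center.
occupancy = [[0] * 5 for _ in range(5)]
occupancy[2][2] = 1
field = esdf_2d(occupancy)
mask = [[True] * 5 for _ in range(5)]  # hypothetical mask: every cell counts

loss_near = masked_esdf_loss([(2, 0), (2, 1)], field, mask)
loss_far = masked_esdf_loss([(0, 0), (0, 1), (0, 2)], field, mask)
```

A trajectory that hugs the obstacle is penalized while one that keeps its distance is not, which is the behavior a collision-avoidance term is meant to encourage during training.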

The odometry head predicts the robot’s relative pose using current and past 4D features and additional sensor data (e.g., IMU, wheel data). It trains a Transformer model to fuse information from different sensors. Each sensor modality is processed by a specific tokenizer, combined with modality embeddings and temporal positional embeddi…

Content automatically shortened.

🔗 Source: syncedreview.com

🤖 TOPINDIATOURS Note

This article is an automated summary compiled from several trusted sources. We pick trending topics so you can stay up to date.
