📌 TOPINDIATOURS Exclusive ai: Nanoscale particle squeezed past quantum noise in ph

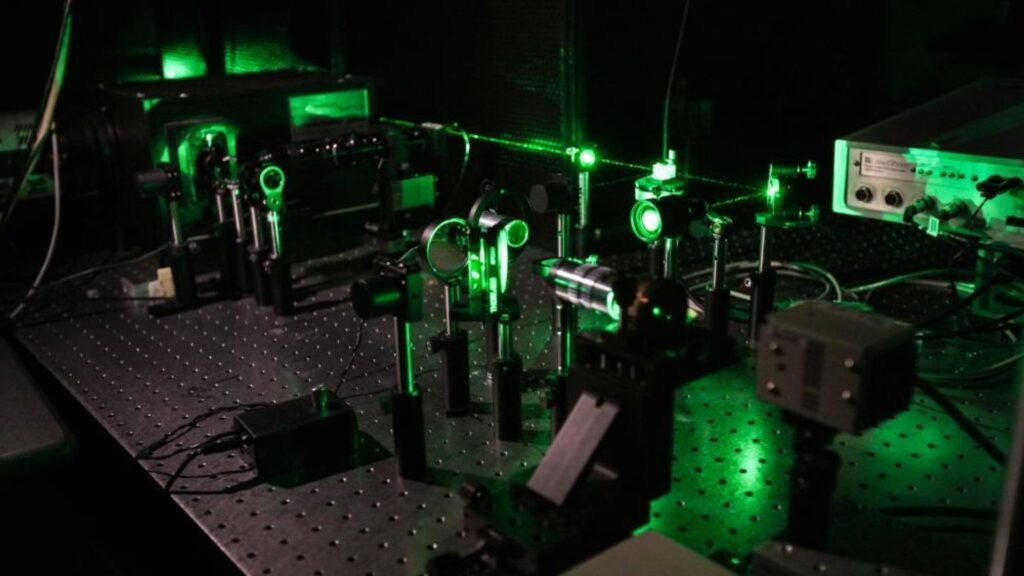

Researchers at the University of Tokyo have pulled off a breakthrough that pushes the boundaries of quantum physics into new territory.

The team demonstrated quantum squeezing of a nanoscale particle's motion: a state in which the uncertainty of the motion is smaller than the quantum mechanical fluctuations usually considered the ultimate limit.

The achievement could open doors not only for probing fundamental physics but also for creating ultra-precise sensors.

These sensors may one day enable technologies such as GPS-free navigation and autonomous driving that operate with pinpoint accuracy.

At the heart of the discovery lies one of quantum mechanics’ strangest features — uncertainty. In the microscopic world, the position and velocity of a particle cannot be measured with absolute precision because they are always subject to fluctuations.

Even in the lowest possible energy state, a particle still experiences “zero-point fluctuations.”

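Quantum squeezing reduces the uncertainty in one of these quantities below the zero-point level, at the cost of a larger uncertainty in the other, creating a state narrower than nature’s usual quantum limit.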
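For reference, the textbook harmonic-oscillator relations behind these statements can be written out as below. This is a sketch under stated assumptions: the symbols $m$ (particle mass) and $\omega$ (angular frequency of the trap) do not appear in the article and are introduced here only for illustration.

$$
\Delta x \,\Delta p \ge \frac{\hbar}{2}, \qquad
\Delta x_{\mathrm{zp}} = \sqrt{\frac{\hbar}{2m\omega}}, \qquad
\Delta v_{\mathrm{zp}} = \frac{\Delta p_{\mathrm{zp}}}{m} = \sqrt{\frac{\hbar\omega}{2m}}
$$

In the ground state the product $\Delta x_{\mathrm{zp}}\,\Delta p_{\mathrm{zp}}$ equals $\hbar/2$ exactly. A velocity-squeezed state has $\Delta v < \Delta v_{\mathrm{zp}}$, which the first inequality permits only if the position spread grows correspondingly, so that $\Delta x > \Delta x_{\mathrm{zp}}$.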

By extending this concept to a nanoscale particle, the Tokyo team has created a new platform to explore how quantum laws apply at scales larger than atoms but still far smaller than everyday objects.

A particle trapped between two worlds

Principal investigator Kiyotaka Aikawa explains the challenge: “Although quantum mechanics has been successful with microscopic particles, such as photons and atoms, it has not been explored to what extent quantum mechanics is correct at macroscopic scales.”

To test this, the team needed an object large enough to bridge the gap. They turned to a nanoscale glass particle, levitating it in a vacuum and cooling it to near its lowest possible energy level.

By carefully adjusting the conditions of its trap and then releasing it briefly, the researchers could measure its velocity distribution.

The key moment came when they found that, under the right timing, the velocity distribution was narrower than the uncertainty expected at the particle’s ground state. This narrowing was the unmistakable sign of quantum squeezing.

Engineering the leap to quantum devices

The journey was anything but easy. Levitating particles are notoriously unstable, and the environment added further noise and fluctuations. The researchers spent years overcoming these issues before finding a condition that worked consistently.

“When we found a condition that could be reliably reproduced,” says Aikawa, “we were surprised how sensitive the levitated nanoscale particle was to the fluctuations of its environment.”

This delicate balance is precisely what makes the platform so powerful.

A levitated nanoscale particle in vacuum represents an isolated system where researchers can study the transition between classical and quantum mechanics. It also offers a testbed for building new quantum devices.

Beyond pure science, the implications are practical. Ultra-sensitive quantum sensors developed from this principle could revolutionize navigation by providing accuracy independent of satellite signals.

They might also enhance measurements in fields as diverse as medicine, geology, and communications.

For now, the Tokyo team is celebrating its success. But their work marks only the beginning of a larger journey, one where quantum mechanics edges ever closer to the macroscopic world we inhabit.

The findings of the study were recently published in the journal Science.

🔗 Source: interestingengineering.com

📌 TOPINDIATOURS Update ai: Researchers from PSU and Duke introduce “Multi-Agent Sy

Share My Research is Synced’s column that welcomes scholars to share their own research breakthroughs with over 2M global AI enthusiasts. Beyond technological advances, Share My Research also calls for interesting stories behind the research and exciting research ideas.

Meet the author

Institutions: Penn State University, Duke University, Google DeepMind, University of Washington, Meta, Nanyang Technological University, and Oregon State University. The co-first authors are Shaokun Zhang of Penn State University and Ming Yin of Duke University.

In recent years, LLM Multi-Agent systems have garnered widespread attention for their collaborative approach to solving complex problems. However, it’s a common scenario for these systems to fail at a task despite a flurry of activity. This leaves developers with a critical question: which agent, at what point, was responsible for the failure? Sifting through vast interaction logs to pinpoint the root cause feels like finding a needle in a haystack—a time-consuming and labor-intensive effort.

This is a familiar frustration for developers. In increasingly complex Multi-Agent systems, failures are not only common but also incredibly difficult to diagnose due to the autonomous nature of agent collaboration and long information chains. Without a way to quickly identify the source of a failure, system iteration and optimization grind to a halt.

To address this challenge, researchers from Penn State University and Duke University, in collaboration with institutions including Google DeepMind, have introduced the novel research problem of “Automated Failure Attribution.” They have constructed the first benchmark dataset for this task, Who&When, and have developed and evaluated several automated attribution methods. This work not only highlights the complexity of the task but also paves a new path toward enhancing the reliability of LLM Multi-Agent systems.

The paper has been accepted as a Spotlight presentation at the top-tier machine learning conference, ICML 2025, and the code and dataset are now fully open-source.

Paper: https://arxiv.org/pdf/2505.00212

Code: https://github.com/mingyin1/Agents_Failure_Attribution

Dataset: https://huggingface.co/datasets/Kevin355/Who_and_When

Research Background and Challenges

LLM-driven Multi-Agent systems have demonstrated immense potential across many domains. However, these systems are fragile; errors by a single agent, misunderstandings between agents, or mistakes in information transmission can lead to the failure of the entire task.

Currently, when a system fails, developers are often left with manual and inefficient methods for debugging:

- Manual Log Archaeology: Developers must manually review lengthy interaction logs to find the source of the problem.

- Reliance on Expertise: The debugging process is highly dependent on the developer’s deep understanding of the system and the task at hand.

This “needle in a haystack” approach to debugging is not only inefficient but also severely hinders rapid system iteration and the improvement of system reliability. There is an urgent need for an automated, systematic method to pinpoint the cause of failures, effectively bridging the gap between “evaluation results” and “system improvement.”

Core Contributions

This paper makes several groundbreaking contributions to address the challenges above:

1. Defining a New Problem: The paper is the first to formalize “automated failure attribution” as a specific research task. This task is defined by identifying the failure-responsible agent and the decisive error step that led to the task’s failure.

2. Constructing the First Benchmark Dataset, Who&When: This dataset includes a wide range of failure logs collected from 127 LLM Multi-Agent systems, which were either algorithmically generated or hand-crafted by experts to ensure realism and diversity. Each failure log is accompanied by fine-grained human annotations for:

Who: The agent responsible for the failure.

When: The specific interaction step where the decisive error occurred.

Why: A natural language explanation of the cause of the failure.

3. Exploring Initial “Automated Attribution” Methods: Using the Who&When dataset, the paper designs and assesses three distinct methods for automated failure attribution:

All-at-Once: This method provides the LLM with the user query and the complete failure log, asking it to identify the responsible agent and the decisive error step in a single pass. While cost-effective, it may struggle to pinpoint precise errors in long contexts.

Step-by-Step: This approach mimics manual debugging by having the LLM review the interaction log sequentially, making a judgment at each step until the error is found. It is more precise at locating the error step but incurs higher costs and risks accumulating errors.

Binary Search: A compromise between the first two methods, this strategy repeatedly divides the log in half, using the LLM to determine which segment contains the error. It then recursively searches the identified segment, offering a balance of cost and performance.
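The control flow of the three methods can be sketched as below. This is a minimal illustration and not the authors' implementation: the `Step` type and the judge callables (`judge_log`, `is_decisive`, `in_first_half`) are hypothetical stand-ins for LLM calls, and the demo replaces them with stubs that already know the answer.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Step:
    agent: str    # name of the agent that produced this step
    content: str  # what the agent said or did


Log = List[Step]
Verdict = Optional[Tuple[str, int]]  # (responsible agent, decisive step index)


def all_at_once(query: str, log: Log,
                judge_log: Callable[[str, Log], Tuple[str, int]]) -> Verdict:
    """One judge call over the whole log (hypothetical judge signature)."""
    return judge_log(query, log) if log else None


def step_by_step(query: str, log: Log,
                 is_decisive: Callable[[str, Log], bool]) -> Verdict:
    """Walk the log in order and stop at the first step judged decisive."""
    for i in range(len(log)):
        # The judge sees the history up to and including step i.
        if is_decisive(query, log[: i + 1]):
            return log[i].agent, i
    return None  # the judge never flagged a step


def binary_search(query: str, log: Log,
                  in_first_half: Callable[[str, Log, int, int, int], bool]) -> Verdict:
    """Halve the candidate range until a single step remains."""
    if not log:
        return None
    lo, hi = 0, len(log) - 1
    while lo < hi:
        mid = (lo + hi) // 2
        # Ask whether the decisive error lies in steps lo..mid (inclusive).
        if in_first_half(query, log, lo, mid, hi):
            hi = mid
        else:
            lo = mid + 1
    return log[lo].agent, lo


# Demo with stub judges that already know the decisive step (index 3).
log = [Step("planner", "plan"), Step("coder", "draft"), Step("coder", "patch"),
       Step("tester", "wrong verdict"), Step("planner", "wrap up"),
       Step("coder", "final answer")]
TRUE_STEP = 3
calls = {"step": 0, "binary": 0}


def stub_is_decisive(query: str, prefix: Log) -> bool:
    calls["step"] += 1
    return len(prefix) - 1 == TRUE_STEP


def stub_in_first_half(query: str, log: Log, lo: int, mid: int, hi: int) -> bool:
    calls["binary"] += 1
    return lo <= TRUE_STEP <= mid


print(step_by_step("q", log, stub_is_decisive), calls["step"])
print(binary_search("q", log, stub_in_first_half), calls["binary"])
```

With these stubs both searches return `('tester', 3)`; the step-by-step walk needs four judge calls and the binary search three. The gap widens on longer logs, which is the cost trade-off described above. A real judge can answer wrongly at any call, so neither search is guaranteed to land on the true step.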

Experimental Results and Key Findings

Experiments were conducted in two settings: one where the LLM knows the ground truth answer to the problem the Multi-Agent system is trying to solve (With Ground Truth) and one where it does not (Without Ground Truth). The primary model used was GPT-4o, though other models were also tested. The systematic evaluation of these methods on the Who&When dataset yielded several important insights:

- A Long Way to Go: Current methods are far from perfect. Even the best-performing single method achieved an accuracy of only about 53.5% in identifying the responsible agent and a mere 14.2% in pinpointing the exact error step. Some methods performed even worse than random guessing, underscoring the difficulty of the task.

- No “All-in-One” Solution: Different methods excel at different aspects of the problem. The All-at-Once method is better at identifying “Who,” while the Step-by-Step method is more effective at determining “When.” The Binary Search method provides a middle-ground performance.

- Hybrid Approaches Show Promise but at a High Cost: The researchers found that combining different methods, such as using the All-at-Once approach to identify a potential agent and then applying the Step-by-Step method to find the error, can improve overall performance. However, this comes with a significant increase in computational cost.

- State-of-the-Art Models Struggle: Surprisingly, even the most advanced reasoning m…
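The two accuracy figures quoted in the findings above (responsible agent and error step) could be computed along the following lines. This is a sketch under assumptions: the `Attribution` record and its field names are hypothetical rather than the dataset's actual schema, and step-level accuracy is assumed to mean an exact match on the step index alone.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass(frozen=True)
class Attribution:
    who: str   # agent held responsible for the failure
    when: int  # index of the decisive error step


def attribution_accuracy(preds: Sequence[Attribution],
                         labels: Sequence[Attribution]) -> Tuple[float, float]:
    """Return (agent-level accuracy, step-level accuracy) over paired records."""
    if len(preds) != len(labels):
        raise ValueError("preds and labels must have the same length")
    if not labels:
        raise ValueError("need at least one annotated failure log")
    n = len(labels)
    agent_hits = sum(p.who == g.who for p, g in zip(preds, labels))
    step_hits = sum(p.when == g.when for p, g in zip(preds, labels))
    return agent_hits / n, step_hits / n


# Made-up records for illustration only; not taken from Who&When.
labels = [Attribution("planner", 2), Attribution("coder", 5),
          Attribution("coder", 1), Attribution("tester", 4)]
preds = [Attribution("planner", 2), Attribution("coder", 3),
         Attribution("planner", 1), Attribution("tester", 0)]

agent_acc, step_acc = attribution_accuracy(preds, labels)
print(agent_acc, step_acc)  # 0.75 0.5
```

Scoring the two fields independently makes it easy to see a method that is strong on “Who” but weak on “When”, which is the pattern reported for All-at-Once.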

Content automatically truncated.

🔗 Source: syncedreview.com

🤖 TOPINDIATOURS Note

This article is an automated summary drawn from several trusted sources. We pick trending topics so you always stay up to date.
