📌 TOPINDIATOURS Hot ai: China’s new RNA mapping technique could potentially analyz

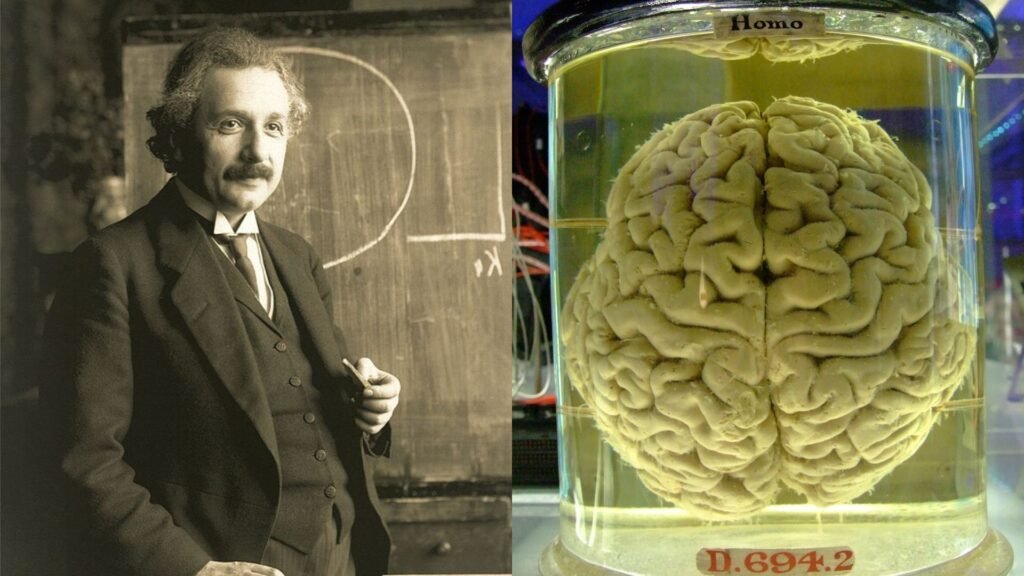

Could the preserved brain of Albert Einstein, sliced into 240 blocks and stored since his death in 1955, hold clues to the cellular basis of genius?

Could long-frozen cancer tissues, tucked away in hospital archives for nearly a decade, now reveal secrets of tumor evolution?

Chinese scientists say their upgraded RNA-mapping technique could one day make such studies possible.

A team from BGI-Research and partner institutes has developed Stereo-seq V2, an advanced spatial transcriptomics tool that can analyze old biological samples once considered unusable.

The method successfully processed cancer tissues stored under poor conditions for nearly a decade, raising hopes that long-preserved samples could be mined for new scientific insights.

“If we are fortunate enough to analyse Einstein’s brain, we could give it a try,” Li Yang, a research associate at BGI-Research, told the South China Morning Post.

“But the challenges are significant because the preservation techniques at that time may not have been very good. It is hard to say.”

Einstein’s brain was preserved using mid-20th-century methods, long before advances in genetic storage, making its viability uncertain.

Still, the Stereo-seq V2 breakthrough signals a major step toward unlocking valuable information from old clinical archives.

Breathing life into history

Traditional biospecimens are kept in formalin-fixed, paraffin-embedded (FFPE) blocks, which are cost-effective and stable for decades but often chemically damaged. That damage has limited DNA and RNA analysis, a serious constraint because DNA stores genetic information while RNA carries the instructions cells use to make proteins.

Stereo-seq V2 overcomes those barriers by improving RNA capture efficiency and using random-primed chemistry to achieve full gene-body coverage, even in degraded samples.

In trials, the tool mapped RNA at single-cell resolution, identifying tumor subtypes and immune responses within old cancer tissue.

Co-corresponding author Liao Sha, chief technology officer of STOmics, said the team carefully screens samples before use. “If the samples had degraded too much, we would not be able to analyse them effectively,” she said.

Beyond oncology, the platform has already demonstrated its ability to simultaneously profile both host and microbial RNAs in tuberculosis studies, offering insights into how pathogens interact with immune systems over time.

Expanding a precious archive

Hospitals worldwide store millions of FFPE samples, often for 20 years or more. Until now, much of that genetic information was locked away by preservation damage. Li said the new method expands the pool of research material available for rare diseases.

“Many rare diseases require a long time to accumulate samples,” Li said. “Now, we can effectively utilise precious samples that have been preserved over the long term.”

Researchers believe the approach could lead to earlier diagnostics, more personalized cancer treatments, and retrospective studies on archived specimens.

Hospitals may also explore building joint laboratories to process samples in-house, reducing risks from external transfers.

While the prospect of decoding Einstein’s genius remains uncertain, the real-world applications of Stereo-seq V2 are immediate, offering scientists sharper tools to explore the biology of cancer, infection, and rare diseases locked away in tissue archives.

The study was published last month in the journal Cell.

🔗 Source: interestingengineering.com

📌 TOPINDIATOURS Hot ai: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
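
To make the scaling argument concrete, here is a rough sketch that counts work units only. It ignores constant factors, hidden dimensions, and hardware effects, and `TOKENS_PER_FRAME` is a hypothetical value chosen for illustration rather than a figure from the paper.

```python
def attention_pair_count(num_tokens: int) -> int:
    """Query-key pairs scored by full self-attention: grows as N^2."""
    return num_tokens * num_tokens


def scan_step_count(num_tokens: int) -> int:
    """State updates made by a recurrent scan: one per token, grows as N."""
    return num_tokens


TOKENS_PER_FRAME = 256  # hypothetical value, for illustration only

for frames in (16, 64, 256):
    n = frames * TOKENS_PER_FRAME
    ratio = attention_pair_count(n) // scan_step_count(n)
    print(f"{frames:>4} frames: attention scores {ratio}x more units than a scan")
```

Under these assumptions, doubling the context doubles the cost of a scan but quadruples the cost of full attention, which is why long contexts become impractical for attention-only models.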

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
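
The idea of a compressed state carried across blocks can be illustrated with a toy scalar recurrence. This is a minimal sketch, not the authors' SSM layer: the function names, the `decay` and `gain` values, and the way the sequence is split into blocks are all assumptions made for illustration, and the article does not specify how the paper partitions tokens.

```python
from typing import List, Tuple


def ssm_scan(xs: List[float], state: float = 0.0,
             decay: float = 0.9, gain: float = 0.1) -> Tuple[List[float], float]:
    """Toy scalar linear recurrence: h_t = decay * h_{t-1} + gain * x_t."""
    outputs = []
    for x in xs:
        state = decay * state + gain * x
        outputs.append(state)
    return outputs, state


def blockwise_scan(xs: List[float], block_size: int) -> List[float]:
    """Scan one block at a time, handing the final state to the next block."""
    outputs: List[float] = []
    state = 0.0
    for start in range(0, len(xs), block_size):
        block_out, state = ssm_scan(xs[start:start + block_size], state)
        outputs.extend(block_out)
    return outputs


signal = [1.0] + [0.0] * 11  # one event at t=0, then silence
full, _ = ssm_scan(signal)
blocked = blockwise_scan(signal, block_size=4)

# The event at t=0 still influences the last block via the carried state,
# and the block-wise result matches a single uninterrupted scan.
print(blocked[-1] > 0.0, blocked == full)
```

The point of the sketch is that the only thing passed between blocks is a fixed-size state, so memory of early inputs survives without the model revisiting earlier tokens.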

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During training, the model generates frames conditioned on a clean prefix of the input, which pushes it to maintain consistency over longer durations. When no prefix is sampled and all tokens stay noised, training becomes equivalent to diffusion forcing, which the authors highlight as the special case of long-context training where the prefix length is zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
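
The two training strategies above can be sketched at the frame level. This is one interpretation of the description, not the authors' code: it does not use FlexAttention, the noise range is a hypothetical choice, and the masking rule (own chunk plus the immediately preceding chunk) is an assumption that matches the "chunks of 5, window of 10" example.

```python
import random
from typing import List


def sample_noise_levels(num_frames: int, prefix_len: int,
                        rng: random.Random) -> List[float]:
    """Noise level per frame: 0.0 for clean prefix frames, random otherwise.

    With prefix_len == 0 every frame is noised independently, which is the
    diffusion-forcing case described above.
    """
    return [0.0 if t < prefix_len else rng.uniform(0.05, 1.0)
            for t in range(num_frames)]


def frame_local_mask(num_frames: int, chunk_size: int) -> List[List[bool]]:
    """mask[q][k] is True when frame q may attend to frame k.

    Assumed rule: q sees every frame in its own chunk (bidirectional)
    and every frame in the immediately preceding chunk.
    """
    mask = []
    for q in range(num_frames):
        q_chunk = q // chunk_size
        row = [(k // chunk_size) in (q_chunk - 1, q_chunk)
               for k in range(num_frames)]
        mask.append(row)
    return mask


rng = random.Random(0)
levels = sample_noise_levels(num_frames=8, prefix_len=3, rng=rng)
mask = frame_local_mask(num_frames=15, chunk_size=5)

print(levels[:3])    # prefix frames are clean: [0.0, 0.0, 0.0]
print(sum(mask[7]))  # frame 7 sees chunks 0 and 1: 10 frames
```

A block-structured mask like this leaves most of the attention matrix empty, which is the kind of sparsity that lets a masked-attention kernel skip work compared with a fully causal mask.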

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com
