📌 TOPINDIATOURS Hot AI: 3x power boost: Microsoft launches Maia 200 to run AI infe

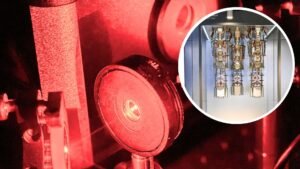

Microsoft has introduced the Maia 200, its second-generation in-house AI chip, as competition intensifies around the cost of running large models.

Unlike earlier hardware pushes that focused on training, the new chip targets inference, the continuous process of serving AI responses to users.

Inference has become a growing expense for AI companies. As chatbots and copilots scale to millions of users, models must run nonstop. Microsoft says Maia 200 is designed for that shift.

The chip comes online this week at a Microsoft data center in Iowa. A second deployment is planned for Arizona.

Designed for inference scale

Maia 200 builds on the Maia 100, which Microsoft launched in 2023. The new version delivers a major performance jump. Microsoft says the chip packs more than 100 billion transistors and produces over 10 petaflops of compute at 4-bit precision. At 8-bit precision, it reaches roughly 5 petaflops.
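The precision figures become more concrete with a quick back-of-the-envelope calculation. The Python sketch below assumes a hypothetical 400-billion-parameter model (not a figure from the article) and counts only the bytes needed to hold its weights. KV caches, activations, and any Maia-specific number formats are ignored. The peak-compute values are the ones Microsoft quotes, with "over 10 petaflops" rounded down to 10.

```python
# Back-of-the-envelope weight memory at different precisions.
# PARAMS is a hypothetical model size for illustration, not from the article.
PARAMS = 400e9

# Peak compute quoted by Microsoft for Maia 200, in petaflops, keyed by bit width.
# "Over 10" at 4-bit is rounded down to 10.0 here.
PEAK_PFLOPS = {8: 5.0, 4: 10.0}


def weight_bytes(num_params: float, bits_per_param: int) -> float:
    """Bytes needed to store the weights alone (no KV cache or activations)."""
    return num_params * bits_per_param / 8


for bits in (8, 4):
    gigabytes = weight_bytes(PARAMS, bits) / 1e9
    print(f"{bits}-bit: ~{gigabytes:.0f} GB of weights, "
          f"~{PEAK_PFLOPS[bits]:.0f} PFLOPS peak")
```

Halving the bits per value halves the weight footprint (about 400 GB to about 200 GB in this made-up case), mirroring the roughly 2x jump in quoted peak compute from 8-bit to 4-bit precision.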

Those figures target real-world workloads rather than training benchmarks. Inference demands speed, stability, and power efficiency. Microsoft says a single Maia 200 node can run today’s largest AI models while leaving room for future growth.

The chip’s design reflects how modern AI services operate. Chatbots must respond quickly even when user traffic spikes.

To handle that demand, Maia 200 includes a large amount of SRAM, a fast on-chip memory type that reduces delays when serving repeated queries.
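One way to see why a large pool of fast memory helps is the standard average-access-time formula for a two-tier memory: if a fraction h of accesses are served by the fast tier, average latency is h * t_fast + (1 - h) * t_slow. The Python sketch below evaluates that formula. The latency numbers are invented placeholders, not Maia 200 specifications, and real inference latency depends on far more than memory access time.

```python
def effective_latency_ns(hit_rate: float, fast_ns: float, slow_ns: float) -> float:
    """Average access latency for a two-tier memory.

    A fraction `hit_rate` of accesses is served from the fast tier (for example
    on-chip SRAM); the rest go to the slow tier (for example off-chip HBM).
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be in [0, 1]")
    return hit_rate * fast_ns + (1.0 - hit_rate) * slow_ns


# Hypothetical latencies chosen only to illustrate the trend; not Maia 200 specs.
SRAM_NS, HBM_NS = 2.0, 100.0

for h in (0.0, 0.5, 0.9):
    print(f"fast-tier hit rate {h:.0%}: "
          f"{effective_latency_ns(h, SRAM_NS, HBM_NS):.1f} ns average")
```

The more of the working set that fits in the fast tier, the closer average latency gets to the fast tier's latency, which is the intuition behind memory-heavy inference designs.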

Several newer AI hardware players rely on memory-heavy designs. Microsoft appears to have adopted that approach to improve responsiveness at scale.

Maia 200 also serves a strategic purpose. Major cloud providers reportedly want to reduce their reliance on NVIDIA, whose GPUs dominate AI infrastructure. While NVIDIA still leads in performance, its hardware and software stack shapes pricing and availability across the industry.

Google already offers its tensor processing units through its cloud. Amazon Web Services promotes its Trainium and Inferentia chips. Microsoft now joins that group with Maia.

The company made direct comparisons. Microsoft says Maia 200 delivers three times the FP4 performance of Amazon’s third-generation Trainium chips.

It also claims stronger FP8 performance than Google’s latest TPU.

Like NVIDIA’s upcoming Vera Rubin processors, Maia 200 is manufactured by Taiwan Semiconductor Manufacturing Co. (TSMC) on a 3-nanometer process.

It also uses high-bandwidth memory (HBM), though of an older generation than NVIDIA’s next chips.

Software closes the gap

Microsoft paired the chip launch with new developer tools. The company aims to narrow a gap that has long favored NVIDIA software.

One key tool is Triton, an open-source framework that helps developers write efficient AI code. OpenAI has made major contributions to the project.

Microsoft positions Triton as an alternative to CUDA, NVIDIA’s dominant programming platform.

Maia 200 already runs inside Microsoft’s own AI services. The company says it supports models from its Superintelligence team and helps power Copilot.

Microsoft has also invited developers, academics, and frontier AI labs to test the Maia 200 software development kit.

With Maia 200, Microsoft signals a broader shift in AI infrastructure. Faster chips still matter. Control over software and deployment now matters just as much.

🔗 Source: interestingengineering.com

📌 TOPINDIATOURS Update AI: Which Agent Causes Task Failures and When? Researchers f

Share My Research is Synced’s column that welcomes scholars to share their own research breakthroughs with over 1.5M global AI enthusiasts. Beyond technological advances, Share My Research also calls for interesting stories behind the research and exciting research ideas. Contact us: chain.zhang@jiqizhixin.com

Meet the authors

Institutions: Penn State University, Duke University, Google DeepMind, University of Washington, Meta, Nanyang Technological University, and Oregon State University. The co-first authors are Shaokun Zhang of Penn State University and Ming Yin of Duke University.

In recent years, LLM Multi-Agent systems have garnered widespread attention for their collaborative approach to solving complex problems. However, these systems often fail at a task despite a flurry of activity. This leaves developers with a critical question: which agent, at what point, was responsible for the failure? Sifting through vast interaction logs to pinpoint the root cause feels like finding a needle in a haystack, a time-consuming and labor-intensive effort.

This is a familiar frustration for developers. In increasingly complex Multi-Agent systems, failures are not only common but also incredibly difficult to diagnose due to the autonomous nature of agent collaboration and long information chains. Without a way to quickly identify the source of a failure, system iteration and optimization grind to a halt.

To address this challenge, researchers from Penn State University and Duke University, in collaboration with institutions including Google DeepMind, have introduced the novel research problem of “Automated Failure Attribution.” They have constructed the first benchmark dataset for this task, Who&When, and have developed and evaluated several automated attribution methods. This work not only highlights the complexity of the task but also paves a new path toward enhancing the reliability of LLM Multi-Agent systems.

The paper has been accepted as a Spotlight presentation at the top-tier machine learning conference, ICML 2025, and the code and dataset are now fully open-source.

Paper: https://arxiv.org/pdf/2505.00212

Code: https://github.com/mingyin1/Agents_Failure_Attribution

Dataset: https://huggingface.co/datasets/Kevin355/Who_and_When

Research Background and Challenges

LLM-driven Multi-Agent systems have demonstrated immense potential across many domains. However, these systems are fragile; errors by a single agent, misunderstandings between agents, or mistakes in information transmission can lead to the failure of the entire task.

Currently, when a system fails, developers are often left with manual and inefficient methods for debugging:

Manual Log Archaeology: Developers must manually review lengthy interaction logs to find the source of the problem.

Reliance on Expertise: The debugging process is highly dependent on the developer’s deep understanding of the system and the task at hand.

This “needle in a haystack” approach to debugging is not only inefficient but also severely hinders rapid system iteration and the improvement of system reliability. There is an urgent need for an automated, systematic method to pinpoint the cause of failures, effectively bridging the gap between “evaluation results” and “system improvement.”

Core Contributions

This paper makes several contributions to address the challenges above:

1. Defining a New Problem: The paper is the first to formalize “automated failure attribution” as a specific research task: identifying both the agent responsible for a failure and the decisive error step that led to it.

2. Constructing the First Benchmark Dataset, Who&When: This dataset includes a wide range of failure logs collected from 127 LLM Multi-Agent systems, which were either algorithmically generated or hand-crafted by experts to ensure realism and diversity. Each failure log is accompanied by fine-grained human annotations for:

Who: The agent responsible for the failure.

When: The specific interaction step where the decisive error occurred.

Why: A natural language explanation of the cause of the failure.

3. Exploring Initial “Automated Attribution” Methods: Using the Who&When dataset, the paper designs and assesses three distinct methods for automated failure attribution:

– All-at-Once: This method provides the LLM with the user query and the complete failure log, asking it to identify the responsible agent and the decisive error step in a single pass. While cost-effective, it may struggle to pinpoint precise errors in long contexts.

– Step-by-Step: This approach mimics manual debugging by having the LLM review the interaction log sequentially, making a judgment at each step until the error is found. It is more precise at locating the error step but incurs higher costs and risks accumulating errors.

– Binary Search: A compromise between the first two methods, this strategy repeatedly divides the log in half, using the LLM to determine which segment contains the error. It then recursively searches the identified segment, offering a balance of cost and performance.
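The three strategies differ mainly in how many judge calls they make and how much of the log each call sees. The Python sketch below captures that control flow under stated assumptions: each "judge" is a hypothetical callable standing in for an LLM prompt, the agent names and log are made up, and the prompts, answer parsing, and fallback behavior of the paper's released code are not reproduced.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Step:
    agent: str    # which agent produced this step
    content: str  # the message or action recorded in the log


Log = List[Step]
Result = Tuple[Tuple[str, int], int]  # ((agent, error step index), judge calls used)

# Hypothetical judge interfaces; in a real system each call would be one LLM prompt.
WholeLogJudge = Callable[[str, Log], int]            # picks the decisive step index
StepJudge = Callable[[str, Log, int], bool]          # is step i the decisive error?
SegmentJudge = Callable[[str, Log, int, int], bool]  # is the error within steps lo..hi?


def all_at_once(query: str, log: Log, judge: WholeLogJudge) -> Result:
    """Single judge call that sees the whole log."""
    i = judge(query, log)
    return (log[i].agent, i), 1


def step_by_step(query: str, log: Log, judge: StepJudge) -> Result:
    """Check steps in order; the judge only sees the log up to the current step."""
    for i in range(len(log)):
        if judge(query, log[: i + 1], i):
            return (log[i].agent, i), i + 1
    last = len(log) - 1  # assumed fallback: blame the final step if none is flagged
    return (log[last].agent, last), len(log)


def binary_search(query: str, log: Log, judge: SegmentJudge) -> Result:
    """Halve the candidate range each call until a single step remains."""
    lo, hi, calls = 0, len(log) - 1, 0
    while lo < hi:
        mid = (lo + hi) // 2
        calls += 1
        if judge(query, log, lo, mid):  # error lies in the first half [lo, mid]
            hi = mid
        else:                           # otherwise it lies in [mid + 1, hi]
            lo = mid + 1
    return (log[lo].agent, lo), calls


# Toy usage: a made-up 8-step log and oracle judges that know the true error step.
agents = ["Planner", "Searcher", "Searcher", "Coder",
          "Coder", "Planner", "Coder", "Planner"]
log = [Step(a, f"message {i}") for i, a in enumerate(agents)]
TRUE_ERR = 3

print(all_at_once("task", log, lambda q, l: TRUE_ERR))
print(step_by_step("task", log, lambda q, l, i: i == TRUE_ERR))
print(binary_search("task", log, lambda q, l, lo, hi: lo <= TRUE_ERR <= hi))
```

With a perfect oracle judge, all three return ("Coder", 3), using 1, 4, and 3 judge calls respectively. Binary search needs about log2(n) calls wherever the error sits, while step-by-step cost grows with the error's position. With real LLM judges, accuracy then depends on how each judge errs on full, partial, or halved logs.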

Experimental Results and Key Findings

Experiments were conducted in two settings: one where the LLM knows the ground truth answer to the problem the Multi-Agent system is trying to solve (With Ground Truth) and one where it does not (Without Ground Truth). The primary model used was GPT-4o, though other models were also tested. The systematic evaluation of these methods on the Who&When dataset yielded several important insights:
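Each Who&When log carries Who, When, and Why labels, and the accuracies in these experiments compare a method's predicted agent and step against the first two. The Python sketch below shows that scoring, assuming exact-match comparison. The class and field names are illustrative rather than the dataset's actual schema, and the toy labels are invented. It also computes one simple definition of a random-guessing baseline: picking an agent and a step uniformly at random in each log.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class Annotation:
    who: str   # agent responsible for the failure
    when: int  # index of the decisive error step
    why: str   # natural-language explanation (not scored here)


@dataclass
class Prediction:
    who: str
    when: int


def _check(gold: Sequence[Annotation], pred: Sequence[Prediction]) -> None:
    if not gold or len(gold) != len(pred):
        raise ValueError("need one prediction per annotated log")


def agent_accuracy(gold: Sequence[Annotation], pred: Sequence[Prediction]) -> float:
    """Share of logs where the predicted agent matches the annotated one."""
    _check(gold, pred)
    return sum(g.who == p.who for g, p in zip(gold, pred)) / len(gold)


def step_accuracy(gold: Sequence[Annotation], pred: Sequence[Prediction]) -> float:
    """Share of logs where the predicted step index matches exactly."""
    _check(gold, pred)
    return sum(g.when == p.when for g, p in zip(gold, pred)) / len(gold)


def random_baseline(num_agents: Sequence[int], num_steps: Sequence[int]) -> Tuple[float, float]:
    """Expected (agent, step) accuracy of guessing uniformly at random per log."""
    agent = sum(1 / n for n in num_agents) / len(num_agents)
    step = sum(1 / n for n in num_steps) / len(num_steps)
    return agent, step


# Invented toy data: three failure logs with hypothetical agent names.
gold = [Annotation("Coder", 3, "wrong formula"),
        Annotation("Planner", 0, "misread the task"),
        Annotation("Searcher", 5, "cited a stale page")]
pred = [Prediction("Coder", 4), Prediction("Planner", 0), Prediction("Coder", 2)]

print(agent_accuracy(gold, pred), step_accuracy(gold, pred))
print(random_baseline(num_agents=[3, 3, 4], num_steps=[8, 10, 20]))
```

Getting the agent right is a coarser target than getting the exact step right, so step-level accuracy is typically the lower of the two. In this toy data the scores are 2/3 versus 1/3.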

– A Long Way to Go: Current methods are far from perfect. Even the best-performing single method achieved an accuracy of only about 53.5% in identifying the responsible agent and a mere 14.2% in pinpointing the exact error step. Some methods performed even worse than random guessing, underscoring the difficulty of the task.

– No “All-in-One” Solution: Different methods excel at different aspects of the problem. The All-at-Once method is better at identifying “Who,” while the Step-by-Step method is more effective at determining “When.” The Binary Search method provides a middle-ground performance.

– Hybrid Approaches Show Promise but at a High Cost: The researchers found that combining different methods, such as using the All-at-Once approach to identify a potential agent and then applying the Step-by-Step method to find the error, can improve overall performance. However, this comes with a significant increase in computational cost.

– State-of-the-Art Models Struggle: Surprisingly, even the most advanced reasoning models, like OpenAI o1 and DeepSeek R1, find this task challenging. This h…

Content automatically shortened.

🔗 Source: syncedreview.com

🤖 TOPINDIATOURS Note

This article is an automatic summary drawn from several trusted sources. We pick trending topics so you can stay up to date without missing out.

✅ Next update in 30 minutes: a random topic awaits!