📌 TOPINDIATOURS Breaking ai: Samsung hikes DDR5 memory prices over 100%, tightenin

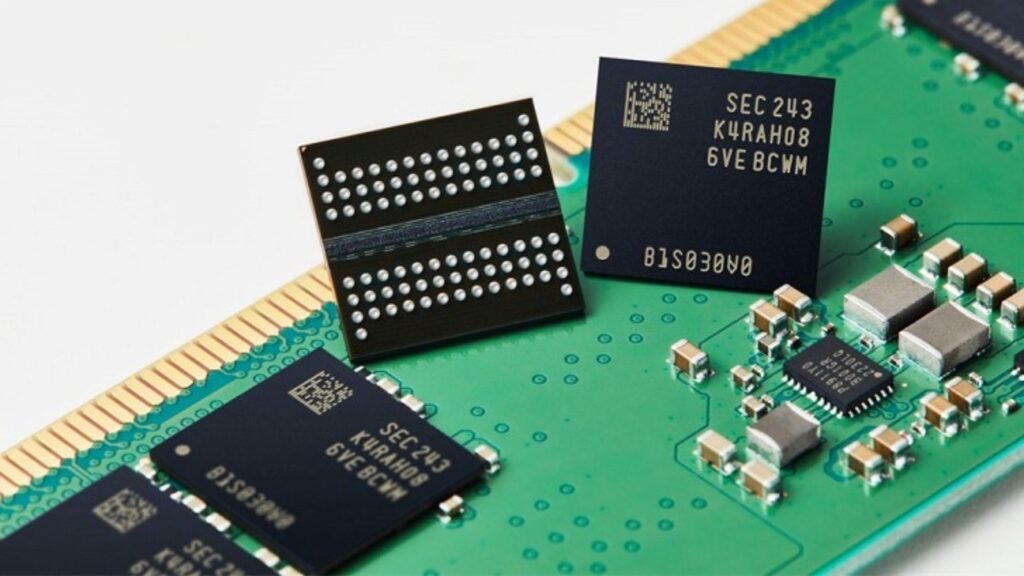

Samsung has reportedly doubled the contract price of DDR5 memory, pushing costs to levels not seen before.

According to industry tracker Jukan on X, Samsung raised DDR5 contract prices by more than 100 percent, lifting them to nearly $20 per unit.
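As a quick sanity check on those figures, the arithmetic below backs out the earlier price they imply. It assumes the increase is exactly 100 percent and the new price is exactly $20. Both are rounded bounds taken from the report, so the result is a rough upper bound rather than a reported number. The helper name `implied_previous_price` is made up for this illustration.

```python
def implied_previous_price(new_price: float, increase_pct: float) -> float:
    """Back out the old price from a new price and a percentage increase."""
    return new_price / (1.0 + increase_pct / 100.0)


# Assumed round numbers: a 100 percent rise that lands at $20 per unit.
old_price = implied_previous_price(new_price=20.0, increase_pct=100.0)
print(f"Implied earlier contract price: about ${old_price:.2f}")
```

Because the reported rise is more than 100 percent and the new price is just under $20, the actual earlier contract price would sit below this estimate.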

The move follows what Samsung reportedly described to “downstream customers” as a severe supply crunch, telling them there is “no stock!”

The sudden increase suggests inflated DRAM pricing may persist well into 2026.

OEMs that buy memory at scale now face sharply higher costs, which they are expected to pass on to consumers across phones, laptops, and PCs.

DDR prices surge

The price shock is not limited to DDR5. Contract pricing for 16 GB of DDR4 DRAM has also jumped sharply, reaching around $18.

That rise removes DDR4 as a temporary cost-saving option for manufacturers.

Taiwanese media reports indicate that spot market prices have climbed even faster than contract prices.

December saw spot DDR5 prices rise further, despite expectations of easing supply.

DDR4 pricing also continues to climb, with “no signs of resting”.

These increases arrive as memory makers prioritize higher-margin products and data center demand.

Consumer device manufacturers now face growing pressure on their bills of materials.

TrendForce expects the situation to worsen. In a recent report, the firm warned memory prices could rise “sharply again” in Q1 2026.

The firm said this would exert “significant cost pressure on global end-device manufacturers”.

Memory now accounts for a larger share of total component costs. That shift leaves OEMs with limited room to absorb increases.
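To see why a price jump squeezes OEMs, the sketch below works through the share arithmetic. All dollar figures are hypothetical placeholders chosen for round numbers. They do not come from TrendForce or any vendor, and `memory_share` is an illustrative helper.

```python
def memory_share(memory_cost: float, other_cost: float) -> float:
    """Fraction of the total bill of materials spent on memory."""
    return memory_cost / (memory_cost + other_cost)


# Hypothetical device: $30 of memory plus $270 of everything else.
OTHER_COST = 270.0
before = memory_share(30.0, OTHER_COST)
# Same device after memory doubles in price and nothing else changes.
after = memory_share(60.0, OTHER_COST)

print(f"Memory share before: {before:.1%}")
print(f"Memory share after:  {after:.1%}")
```

Under these assumed numbers, a doubling in memory cost lifts its share of the bill of materials from a tenth to nearly a fifth, even though no other component changed.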

Specs face cuts

Rising DRAM prices already influence product planning. Smartphone makers are now reconsidering memory configurations for future devices.

TrendForce says manufacturers may reintroduce lower memory tiers to control costs.

Base models could return to 4 GB of RAM in 2026.

That level once defined entry-tier phones but had largely disappeared.

Mid-range and even higher-end smartphones may also see tighter memory allocations.

These cuts could slow upgrade cycles and reduce perceived value for consumers.

Some brands may revive expandable storage. Reports point to a possible return of microSD card slots as a way to offset smaller internal memory.

Apple may also feel the impact. Despite strong margins, analysts expect memory to “significantly increase” as a share of the iPhone bill of materials in early 2026.

That shift could force Apple to reassess pricing strategies. The company may rethink price cuts on older models.

Android vendors face tougher choices. Memory acts as a key marketing differentiator in the mid-to-low-end segments.

Higher DRAM costs could push launch prices higher.

Brands may also adjust the lifecycles of existing models to limit losses.

PC price pressure

PC makers also prepare for cost shocks. TrendForce notes that notebook makers must adjust product portfolios and procurement strategies.

Ultra-thin laptops face the greatest risk. These designs use soldered memory and limit cost-saving options.

Commercial PC buyers may feel the impact first.

TrendForce reports that Dell plans price increases ranging from 10% to 30% on commercial PCs starting December 17, driven by rising memory prices.

Consumer notebooks may hold steady for now. Existing inventory and lower-cost memory offer short-term protection.

TrendForce warns that medium- and long-term changes remain unavoidable.

More volatility could hit the PC market by Q2 2026. That timing aligns with Computex 2026, when manufacturers typically refresh lineups.

For consumers, the message is clear. Higher prices and lower specs may define the next hardware cycle.

🔗 Source: interestingengineering.com

📌 TOPINDIATOURS Hot ai: Adobe Research Unlocking Long-Term Memory in Video World M

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
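The scaling gap can be made concrete with a rough count. The sketch below compares the number of token pairs that full self-attention scores against the number of state updates a recurrent scan performs. It ignores constants, heads, and hidden sizes, and the tokens-per-frame value is a hypothetical placeholder rather than a figure from the paper.

```python
def attention_pair_count(num_frames: int, tokens_per_frame: int) -> int:
    """Token pairs scored by full self-attention over the whole context."""
    n = num_frames * tokens_per_frame
    return n * n


def recurrent_step_count(num_frames: int, tokens_per_frame: int) -> int:
    """State updates made by a recurrent scan: one per token."""
    return num_frames * tokens_per_frame


TOKENS_PER_FRAME = 256  # hypothetical value, not taken from the paper

for frames in (16, 64, 256):
    pairs = attention_pair_count(frames, TOKENS_PER_FRAME)
    steps = recurrent_step_count(frames, TOKENS_PER_FRAME)
    print(f"{frames:>4} frames: {pairs:>16,} attention pairs, {steps:>8,} scan steps")
```

Doubling the number of frames quadruples the attention pair count but only doubles the scan step count, which is the gap the article describes.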

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
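The idea of a compressed state that carries information across blocks can be illustrated with a toy example. The sketch below is not the LSSVWM architecture. It is a scalar linear recurrence, h_t = decay * h_(t-1) + x_t, processed block by block, with the final state of one block handed to the next. It does not model the paper's scanning layout or the spatial-consistency trade-off. The function names and the decay value are assumptions made for illustration.

```python
from typing import List, Tuple


def scan_block(state: float, inputs: List[float], decay: float) -> Tuple[float, List[float]]:
    """Run h_t = decay * h_{t-1} + x_t over one block, starting from `state`."""
    outputs = []
    for x in inputs:
        state = decay * state + x
        outputs.append(state)
    return state, outputs


def scan_in_blocks(inputs: List[float], block_size: int, decay: float) -> List[float]:
    """Process the sequence block by block, passing only the state between blocks."""
    state = 0.0
    outputs: List[float] = []
    for start in range(0, len(inputs), block_size):
        state, block_out = scan_block(state, inputs[start:start + block_size], decay)
        outputs.extend(block_out)
    return outputs


# A single impulse at the first step, then silence.
signal = [1.0] + [0.0] * 7
full = scan_block(0.0, signal, decay=0.5)[1]
blocked = scan_in_blocks(signal, block_size=4, decay=0.5)

print("full scan:   ", full)
print("blocked scan:", blocked)
```

The blocked scan reproduces the full scan because the single carried number summarizes everything seen so far. The impulse from the first step is still visible, in decayed form, at the last step of the second block.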

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: This technique encourages the model to generate frames conditioned on a prefix of the input, effectively forcing it to learn to maintain consistency over longer durations. By sometimes not sampling a prefix and keeping all tokens noised, the training becomes equivalent to diffusion forcing, which is highlighted as a special case of long-context training where the prefix length is zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
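Both strategies above can be sketched at the frame level. The code below is one reading of the description, not the authors' implementation. `sample_noise_levels` keeps a clean prefix and draws an independent noise level for every remaining frame, so a prefix length of zero reduces to plain diffusion forcing. `frame_local_mask` lets each frame attend to every frame in its own chunk and in the previous chunk. The function names, the noise range, and the uniform sampling are assumptions.

```python
import random
from typing import List


def sample_noise_levels(num_frames: int, prefix_len: int, rng: random.Random) -> List[float]:
    """Clean prefix (noise 0.0) followed by independently noised frames."""
    return [
        0.0 if t < prefix_len else rng.uniform(0.05, 1.0)
        for t in range(num_frames)
    ]


def frame_local_mask(num_frames: int, chunk_size: int) -> List[List[bool]]:
    """mask[q][k] is True when query frame q may attend to key frame k."""
    mask = []
    for q in range(num_frames):
        q_chunk = q // chunk_size
        row = []
        for k in range(num_frames):
            k_chunk = k // chunk_size
            # Same chunk (bidirectional) or the chunk immediately before it.
            row.append(k_chunk == q_chunk or k_chunk == q_chunk - 1)
        mask.append(row)
    return mask


rng = random.Random(0)
print(sample_noise_levels(num_frames=8, prefix_len=3, rng=rng))

mask = frame_local_mask(num_frames=15, chunk_size=5)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
```

With a chunk size of 5, every frame past the first chunk sees exactly 10 frames: its own chunk plus the one before it, which matches the window size quoted above. An actual FlexAttention mask would apply the same predicate to token indices rather than frame indices.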

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results in the paper's supplementary figures (e.g., S1, S2, S3) illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods than models relying solely on causal attention, or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows an improved ability to recall and use information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

🤖 TOPINDIATOURS Note

This article is an automated summary compiled from several trusted sources. We pick trending topics so you stay up to date.